Picture this: PagerDuty just woke you up. The billing service is throwing 500 errors, and customer checkouts are failing.

What is your next move?

If you are like most engineers, you are about to open a dozen tabs. You’ll pull up your logging platform, your tracing tool, maybe a security dashboard, and start frantically writing queries in languages you only half-remember to hunt for the needle in the haystack.

But what if, instead of all that, you just opened Claude or your VS Code terminal and asked:

"Show me all critical alerts and error logs from the billing service over the past 10 minutes, and cross-reference them with the latest deployment."

And what if... it just gave you the answer?

No hallucinations. No generic troubleshooting steps. Just the exact root cause, pulled directly from your live production data.

Welcome to the future. The Model Context Protocol (MCP) is officially LIVE on CtrlB, and it is ushering in the era of Headless Observability.

The Problem: Prediction Engines vs. Execution Engines

Generative AI and coding assistants like Cursor and Windsurf have revolutionized how we write code. But when it comes to running and debugging systems in production, they hit a massive wall.

Fundamentally, LLMs like the models behind Claude or ChatGPT are prediction engines. They are incredibly smart at generating text and code based on their training data, but they cannot execute real-world tasks on their own. By default, an LLM cannot query your database, check your Slack messages, or pull a live metric or trace.

Until now, bridging the gap between your AI tools and your live system telemetry meant copying and pasting massive walls of JSON into a chat window. It was slow, constrained by token limits, and a security nightmare. Before MCP, there was no standard way to connect AI with external tools. Every integration required custom, brittle API glue code.

Your AI was effectively a brilliant detective locked in a room without access to the crime scene.

Enter MCP: The "REST API" for AI

To fix this, the industry needed a universal standard. Think of the Model Context Protocol (MCP) as the REST API standard, but built specifically for AI agents.

Originally introduced by Anthropic as an open standard, MCP provides a unified protocol that enables AI models to interact seamlessly with external tools, systems, and data. It transforms LLMs from simple text generators into action-performing agents.

Under the Hood: How MCP Works

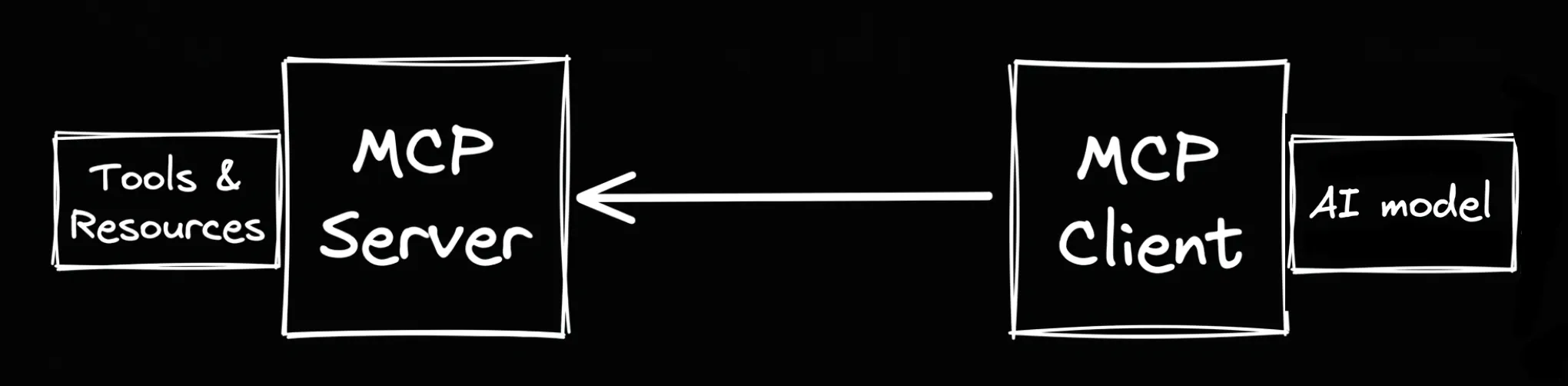

MCP utilizes a clean Client-Server architecture:

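- The Client: Your AI application or IDE (like Claude Desktop, Cursor, or VS Code). Strictly speaking, the MCP specification calls the application itself the host, and the host runs one MCP client for each server it connects to.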

- The Server: A lightweight backend service (like the one CtrlB just launched) that exposes your private data and capabilities securely.

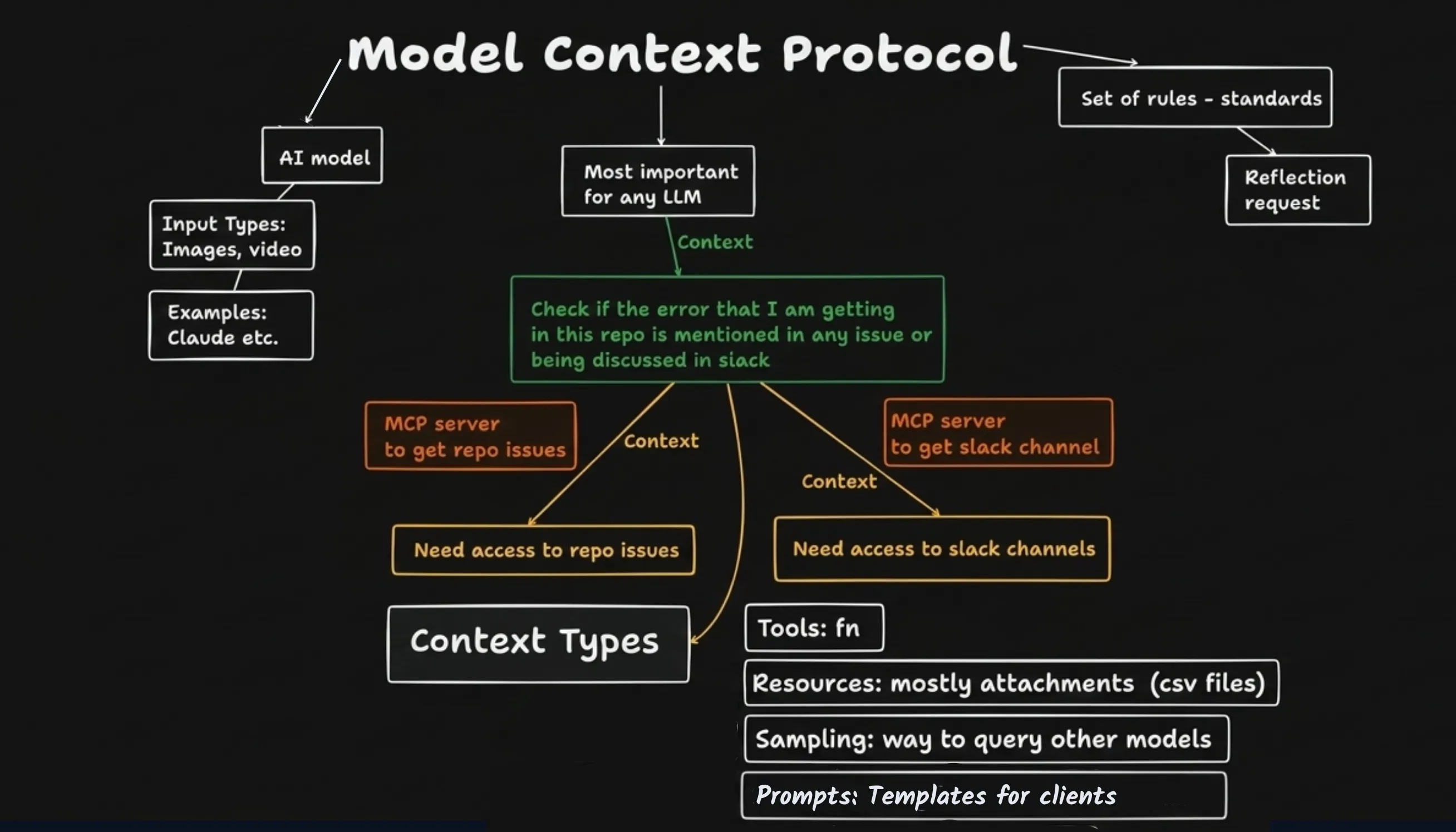

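Building an MCP server is surprisingly simple for developers: you write normal backend functions and expose them through the protocol. MCP then connects the AI model to your systems through four powerful primitives. The first three are offered by the server; the fourth, Sampling, is offered by the client: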

- Tools (Functions): These enable the AI to execute tasks. For example, CtrlB exposes tools like query_logs(service, timeframe) or fetch_security_alerts(). The AI decides when and how to call these tools based on your natural language prompt.

- Resources (Data): Structured access to static or dynamic data. This allows the AI to read your database schemas, configuration files, or raw log streams directly.

- Prompts (Instructions): Reusable templates that standardize how the AI should behave or format its requests when interacting with specific domains.

- Sampling (Model Orchestration): A more advanced feature allowing the server to request LLM completions through the client, enabling complex, multi-step agentic workflows securely.
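To make the Tools primitive a little more concrete, here is a minimal sketch of the idea in plain Python. It is not CtrlB's implementation and it does not use an MCP SDK: it only assumes what the protocol itself defines, namely that MCP messages are JSON-RPC 2.0 and that tools are discovered with `tools/list` and invoked with `tools/call`. The `query_logs` name comes from the example above; the `tool` decorator, the `handle_request` function, and the in-memory `FAKE_LOGS` data are hypothetical stand-ins, and the `tools/list` response is simplified (a real server also publishes an input schema for each tool).

```python
import json
from typing import Any, Callable, Dict

# Hypothetical in-memory data standing in for a real telemetry backend.
FAKE_LOGS = [
    {"service": "billing", "level": "ERROR", "message": "HTTP 500 on /checkout"},
    {"service": "billing", "level": "INFO", "message": "health check ok"},
    {"service": "auth", "level": "ERROR", "message": "token expired"},
]

# Registry mapping tool names to ordinary Python functions.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a plain function as a tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def query_logs(service: str, timeframe: str) -> list:
    """Return error logs for a service (timeframe is ignored in this toy example)."""
    return [e for e in FAKE_LOGS if e["service"] == service and e["level"] == "ERROR"]


def handle_request(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request string and return the response string."""
    req = json.loads(raw)
    base = {"jsonrpc": "2.0", "id": req.get("id")}
    method = req.get("method")

    if method == "tools/list":
        # Simplified listing: real servers also include an inputSchema per tool.
        listing = [
            {"name": name, "description": (fn.__doc__ or "").strip()}
            for name, fn in TOOLS.items()
        ]
        return json.dumps({**base, "result": {"tools": listing}})

    if method == "tools/call":
        params = req.get("params", {})
        fn = TOOLS.get(params.get("name"))
        if fn is None:
            error = {"code": -32602, "message": "Unknown tool"}
            return json.dumps({**base, "error": error})
        output = fn(**params.get("arguments", {}))
        # Tool output is returned to the model as text content.
        result = {"content": [{"type": "text", "text": json.dumps(output)}], "isError": False}
        return json.dumps({**base, "result": result})

    return json.dumps({**base, "error": {"code": -32601, "message": "Method not found"}})


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "query_logs", "arguments": {"service": "billing", "timeframe": "10m"}},
}
print(handle_request(json.dumps(request)))
```

The point of the sketch is the division of labor: the function body is ordinary backend code, and the protocol layer only decides which function to run and how to wrap its output. In practice you would likely reach for an official MCP SDK rather than hand-rolling the dispatch.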

What is "Headless Observability"?

By its very definition, "headless" means separating the core backend data engine from the presentation layer (the UI). For years, observability platforms forced you into their proprietary, expensive, and rigid dashboards.

CtrlB breaks that mold. Because we are a headless observability engine, we give you the freedom to consume your telemetry exactly how you want to:

- Bring Your Own Visualization (BYOV): CtrlB integrates seamlessly with external visualization platforms like Grafana, Apache Superset, and Preset. You get all the power of CtrlB's petabyte-scale backend without having to rip out the dashboards your team already knows and loves.

- Machine-Native Consumption (via MCP): For the times you don't want to build or stare at a dashboard, our new MCP servers allow your AI agents to query, analyze, and understand your system's telemetry natively.

Your AI is no longer just a code generator. It is now your lead incident investigator.

How CtrlB Supercharges Your AI Agents

With our new MCP integration running in production, you can now seamlessly connect CtrlB to the tools your team already lives in (Claude, Cursor, Windsurf, VS Code).

But connecting AI to your data is one thing; doing it at an enterprise scale is another. When you use MCP with CtrlB, you unlock our core platform superpowers:

- Petabyte-Scale Context: CtrlB’s architecture separates compute from storage. Your AI isn't just looking at the last 15 minutes of data; it can instantly search months of historical logs to establish baselines and find anomalies.

- Zero Data Egress Taxes: Querying massive datasets usually results in terrifying cloud bills. Because CtrlB operates securely within your own cloud boundary, your AI can analyze as much data as it needs without you paying a single cent in egress fees.

- Security: SecOps and SREs can breathe easy. Your data never leaves your environment, and CtrlB maintains strict access controls over exactly which Tools and Resources the AI can utilize.

The Future Potential of MCP

We are only scratching the surface. The widespread adoption of MCP is paving the way for a massive shift in software development.

Soon, we will see thriving tool marketplaces where developers can plug and play MCP servers into their AI assistants. We will see AI-driven automation where agents autonomously monitor systems, detect anomalies, write the patch, and deploy the fix, all while logging their actions securely.

Let’s put this into perspective. Imagine you just connected your Cursor IDE to CtrlB via our new MCP server.

Instead of writing a complex regex query to find an issue, you simply type: "Why are database queries from the user-auth pod timing out, and are there any active security alerts associated with those IPs?"

In seconds, the AI invokes the query_logs tool, reads the auth_schema resource, checks the security alerts, and tells you that a newly deployed config file is causing a connection leak.
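For the curious, here is a rough sketch of what that exchange might look like on the wire. It only builds the JSON-RPC 2.0 requests a client could send for the three steps above, using the standard MCP methods `tools/call` and `resources/read`. Everything specific is an assumption for illustration: the `ctrlb://schemas/auth_schema` URI, the argument values, and the `plan_investigation` helper are hypothetical, and a real agent would pick each call based on the previous result rather than follow a fixed plan.

```python
import itertools
import json


def plan_investigation(pod: str) -> list:
    """Build the three JSON-RPC requests for the walkthrough above (illustrative only)."""
    ids = itertools.count(1)  # JSON-RPC request ids must be unique per session

    def rpc(method: str, params: dict) -> dict:
        return {"jsonrpc": "2.0", "id": next(ids), "method": method, "params": params}

    return [
        # Step 1: call the query_logs tool for the affected pod.
        rpc("tools/call", {"name": "query_logs",
                           "arguments": {"service": pod, "timeframe": "15m"}}),
        # Step 2: read the auth_schema resource (the URI is a made-up placeholder).
        rpc("resources/read", {"uri": "ctrlb://schemas/auth_schema"}),
        # Step 3: call the fetch_security_alerts tool.
        rpc("tools/call", {"name": "fetch_security_alerts", "arguments": {}}),
    ]


for request in plan_investigation("user-auth"):
    print(json.dumps(request))
```

Each request gets a matching response from the server, and the model reads those responses as context before writing its answer.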

It’s not magic. It’s Headless Observability.
